
FreiHAND Dataset

News: An extended version of this dataset with calibration and multiple views has been released as HanCo.

News: Due to ongoing problems with the CodaLab evaluation server, we have decided to release the evaluation split annotations publicly on this page.

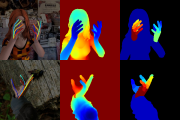

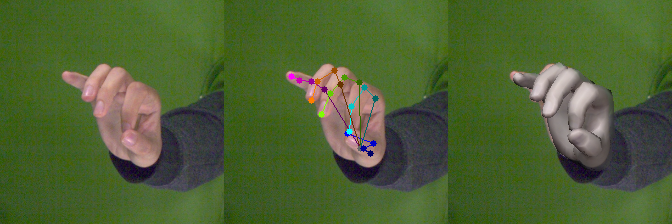

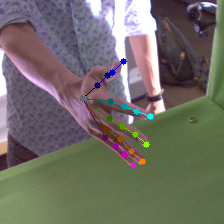

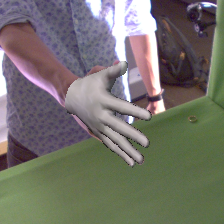

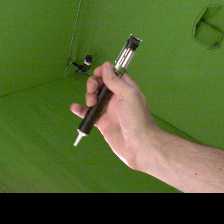

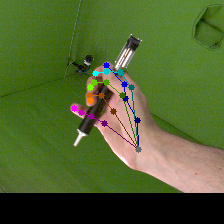

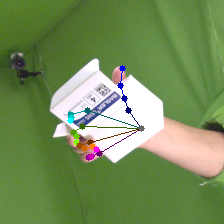

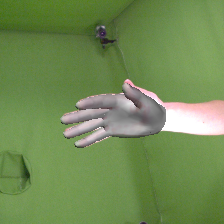

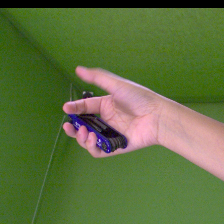

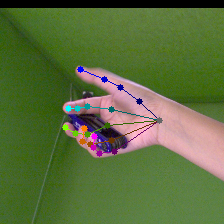

In our recent publication, we presented FreiHAND, a challenging dataset for hand pose and shape estimation from a single color image. It can serve both as a training and as a benchmarking dataset for deep learning algorithms. It contains 4 × 32560 = 130240 training samples and 3960 evaluation samples. Each training sample provides:

| - | RGB image (224x224 pixels) | |

| - | Hand segmentation mask (224x224 pixels) | |

| - | Intrinsic camera matrix K | |

| - | Hand scale (metric length of a reference bone) | |

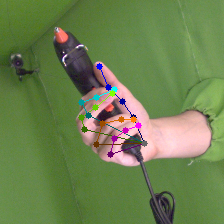

| - | 3D keypoint annotation for 21 Hand Keypoints | |

| - | 3D shape annotation |
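Because each sample ships both 3D keypoints and the intrinsic matrix K, the keypoints can be drawn onto the RGB image by a standard pinhole projection. The sketch below assumes the 21 keypoints are given as an N x 3 array in the camera coordinate frame (positive depth) and that K is a 3x3 pinhole matrix; the numeric values are made up for illustration and are not taken from the dataset. The accompanying GitHub repository may provide its own helper for this.

```python
import numpy as np

def project_points(xyz, K):
    """Project 3D points (N x 3, camera frame) to 2D pixel coordinates (N x 2).

    Assumption: xyz is expressed in the camera coordinate frame with z > 0,
    and K is a 3x3 pinhole intrinsic matrix.
    """
    xyz = np.asarray(xyz, dtype=np.float64)
    K = np.asarray(K, dtype=np.float64)
    uvw = xyz @ K.T                   # each row becomes K @ p (homogeneous pixel coords)
    return uvw[:, :2] / uvw[:, 2:3]   # perspective division by depth

# Hypothetical intrinsics and points, for illustration only.
K = np.array([[500.0, 0.0, 112.0],
              [0.0, 500.0, 112.0],
              [0.0, 0.0, 1.0]])
xyz = np.array([[0.0, 0.0, 0.5],
                [0.05, -0.02, 0.5]])

uv = project_points(xyz, K)
print(uv)  # [[112. 112.], [162.  92.]]
```

A point on the optical axis lands on the principal point (112, 112), the center of a 224x224 image under these assumed intrinsics.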

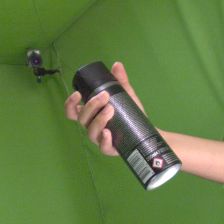

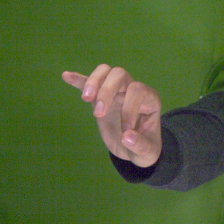

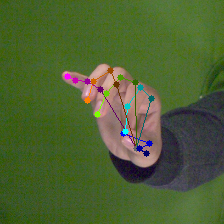

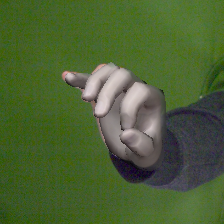

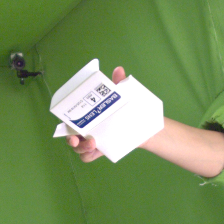

Examples

(Example table with columns RGB, RGB + Keypoints, RGB + Shape; images not included.)

Terms of use

This dataset is provided for research purposes only and without any warranty. Any commercial use is prohibited. If you use the dataset or parts of it in your research, you must cite the following paper:

```bibtex
@InProceedings{Freihand2019,
  author    = {Christian Zimmermann and Duygu Ceylan and Jimei Yang and Bryan Russell and Max Argus and Thomas Brox},
  title     = {FreiHAND: A Dataset for Markerless Capture of Hand Pose and Shape from Single RGB Images},
  booktitle = {IEEE International Conference on Computer Vision (ICCV)},
  year      = {2019},
  url       = {https://lmb.informatik.uni-freiburg.de/projects/freihand/}
}
```


Dataset

For examples of how to work with the dataset, visit the accompanying GitHub repository.

Download FreiHAND Dataset v2 (3.7 GB)

Download FreiHAND Dataset v2 - Evaluation set with annotations (724 MB)
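The training set size, 4 × 32560 = 130240, reflects four versions of 32560 annotated samples. A minimal sketch for mapping a training image index to its annotation index follows. It assumes, without confirmation on this page, that the images are stored as four consecutive blocks of 32560 that share the same annotations, so image i uses annotation i mod 32560. `annotation_index` and `version_of` are hypothetical helpers, not part of the official toolkit.

```python
# Counts taken from the dataset description above.
NUM_UNIQUE = 32560
NUM_VERSIONS = 4
NUM_TRAIN_IMAGES = NUM_VERSIONS * NUM_UNIQUE  # 130240

def annotation_index(image_index: int) -> int:
    """Hypothetical helper: map a training image index to its annotation index.

    Assumption: the 130240 images form 4 consecutive blocks of 32560 images,
    and every block reuses the same 32560 annotations.
    """
    if not 0 <= image_index < NUM_TRAIN_IMAGES:
        raise IndexError(f"image index {image_index} out of range")
    return image_index % NUM_UNIQUE

def version_of(image_index: int) -> int:
    """Hypothetical helper: which of the 4 blocks (0..3) an image belongs to."""
    if not 0 <= image_index < NUM_TRAIN_IMAGES:
        raise IndexError(f"image index {image_index} out of range")
    return image_index // NUM_UNIQUE

print(annotation_index(32560), version_of(32560))  # 0 1
```

Under this assumed layout, images 0, 32560, 65120, and 97680 all share annotation 0. Check the accompanying repository for the actual file layout before relying on it.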